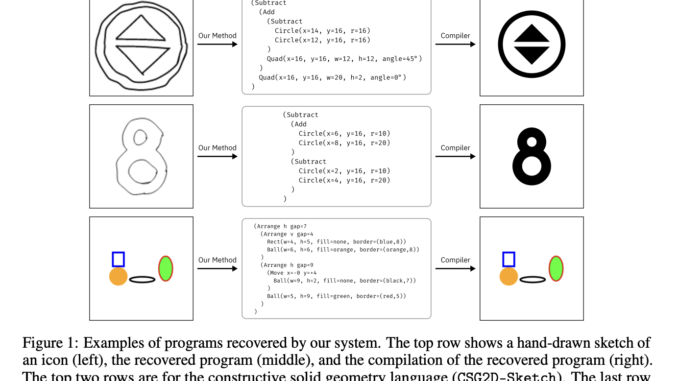

Researchers at UC Berkeley Propose a Neural Diffusion Model that Operates on Syntax Trees for Program Synthesis

Large language models (LLMs) have revolutionized code generation, but their autoregressive design imposes a significant limitation: they generate code token by token and never observe the runtime output of the partial program they have written so far. […]