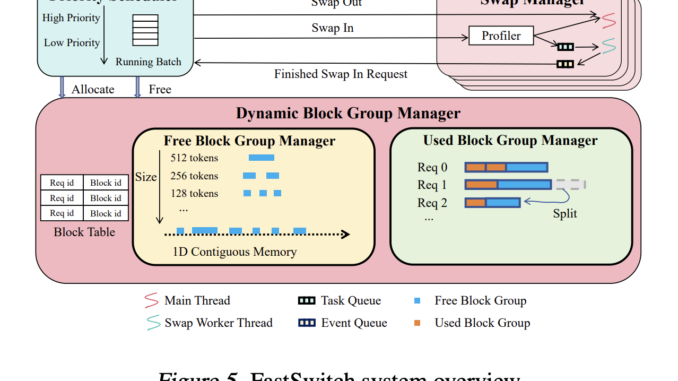

FastSwitch: A Breakthrough in Handling Complex LLM Workloads with Enhanced Token Generation and Priority-Based Resource Management

Large language models (LLMs) have transformed AI applications, powering tasks such as language translation, virtual assistants, and code generation. These models depend on resource-intensive infrastructure, particularly GPUs with high-bandwidth memory, to meet their computational demands. However, […]